
The creation of new tables and extending them with new columns is supported. Capabilities and limitations DDL - Data Definition Language This is because in this example, we have set the upsert option to false, which makes our sink add new rows instead of replacing existing ones like in the earlier example. What you might have noticed, is that we have multiple entries for the same user_id which was defined as a key. Select * from "balance" order by timestamp

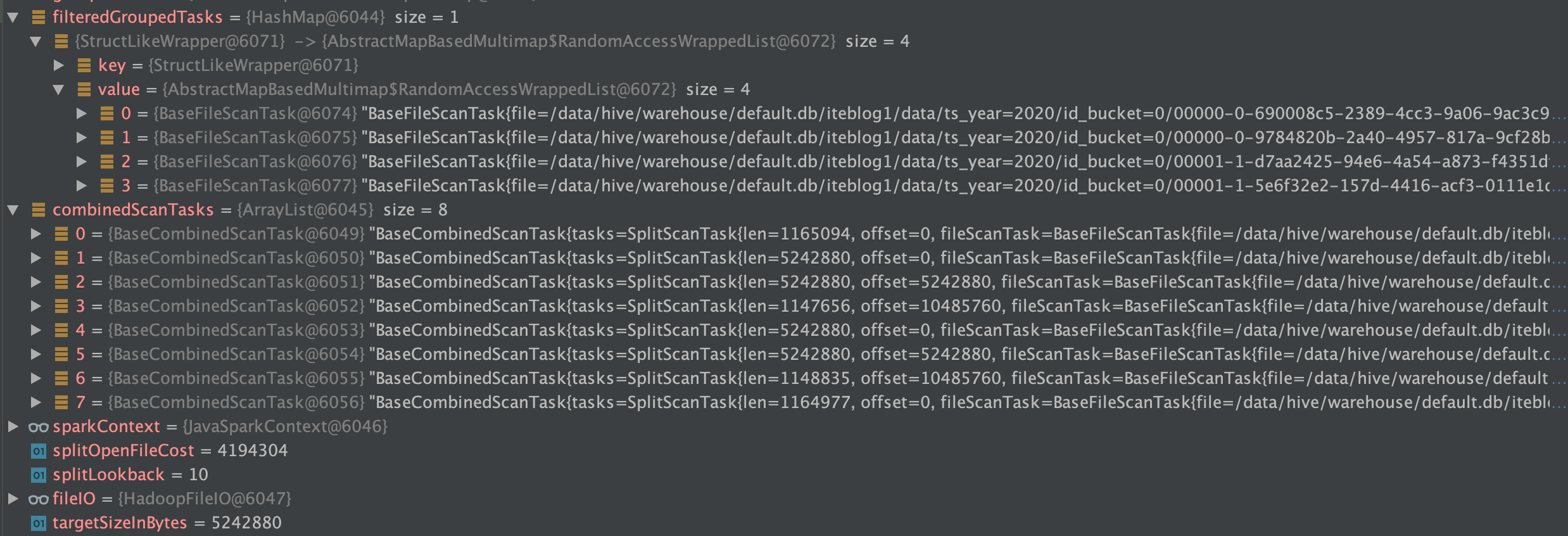

What is left is to run the Data Online Generator "topics": "clickstream,trx,balance,loan", Please note that optionality of that field is set to False and the field that is used for a key also has to be present as part of the value. Next, in order to define the primary key in the Apache Iceberg table we also need to send it as part of the Kafka event key. We have to define structure, values and metadata containing the database, table name and type of operation.ĭef balance_value_function(timestamp: int, subject: User, transition: Transition) -> str: One of the events in our example is a current snapshot of the user balance that contains a user_id which is also a primary key, current balance and timestamp of the last change.įirst we need to change the event format in Debezium. The format contains both before and after states of a change, but our sink is only interested in the after state so we will be skipping the before part. First Step: Data formatĪs stated above, Kafka Connect Apache Iceberg Sink consumes data in the format used by Debezium, so we need to transform our data into it. As output we will have a stream of user clicks in the application, the transaction carried out, current balance and loan information, if one is taken. As a reminder, we are looking at simulated user behavior that interacts with a banking application, can receive income, spend money and take a loan. We will be using the exact same scenario as described in that blog post. Today, we will use it to generate simulated real-time data and stream it to Apache Iceberg tables. In our blog post: “ Data online generation for event stream processing”, we showcased a tool developed in GetInData for data generation based on state machines. 
Select * from debeziumcdc_postgres_public_dbz_test order by timestamp desc Īpache Iceberg: Real-time ingestion example with Data online generator Now we can open a psql client and create some tables and data "iceberg.fs.s3a.impl": ".s3a.S3AFileSystem" "iceberg.fs.defaultFS": "s3a://gid-streaminglabs-eu-west-1/dbz_iceberg/gl_test", "iceberg.warehouse": "s3a://gid-streaminglabs-eu-west-1/dbz_iceberg/gl_test", "iceberg.catalog-impl": ".glue.GlueCatalog", "table.namespace": "gid_streaminglabs_eu_west_1_dbz", First step: Run Kafka Connectįirst authenticate and store AWS credentials in a file, for example ~/.aws/configĬurl -X POST -H "Content-Type: application/json" \ Later we will use Amazon Athena to read and display the data.

A Kafka topic will be used to communicate between them and sink will be writing data to S3 bucket and metadata to Amazon Glue. We will run a Kafka Connect instance on which we will deploy Debezium source and our Apache Iceberg sink. Let's try to use our sink to replicate the PostgreSQL database using Debezium to capture all changes and stream it to the Apache Iceberg table. You can read more about this format here. The Apache Iceberg sink was created based on the memiiso/debezium-server-iceberg which was created for stand-alone usage with the Debezium Server.ĭata format that is consumed by Apache Iceberg has to represent table-like data and its schema, therefore we used a format created by Debezium for change data capture. You can find the repository and released package on our GitHub. Kafka Connect Apache Iceberg sinkĪt GetInData we have created an Apache Iceberg sink that can be deployed on a Kafka Connect instance. It's worth mentioning that Apache Iceberg can be used with any cloud provider or in-house solution that supports Apache Hive metastore and blob storage. Apache Iceberg provides mechanisms for read-write isolation and data compaction out of the box, to avoid small file problems. This technology can be used not only in batch processing but can also be a great tool to capture real-time data that comes from user activity, metrics, logs, from change data capture or other sources.

You can read more about Apache Iceberg and how to work with it in a batch job environment in our blog post “Apache Spark with Apache Iceberg - a way to boost your data pipeline performance and safety“ written by Paweł Kociński. Apache Iceberg is an open table format for huge analytics datasets which can be used with commonly-used big data processing engines such as Apache Spark, Trino, PrestoDB, Flink and Hive.
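Returning to the connector deployment step, which posts JSON to Kafka Connect with curl: the same request can be prepared from Python. This is a minimal sketch under stated assumptions. The worker URL `http://localhost:8083`, the connector name `iceberg-sink` and the helper `build_connector_request` are hypothetical, and the configuration lists only keys quoted in this post. A real deployment needs further settings (for example the connector class) that the text here does not show.

```python
import json
import urllib.request


def build_connector_request(base_url: str, name: str, config: dict) -> urllib.request.Request:
    """Prepare (but do not send) a POST /connectors request for Kafka Connect."""
    body = json.dumps({"name": name, "config": config}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/connectors",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical values: the connector name and the Connect URL are assumptions.
# Only configuration keys that appear in the post are listed; this is not a
# complete sink configuration.
sink_config = {
    "topics": "clickstream,trx,balance,loan",
    "table.namespace": "gid_streaminglabs_eu_west_1_dbz",
    "iceberg.warehouse": "s3a://gid-streaminglabs-eu-west-1/dbz_iceberg/gl_test",
}
request = build_connector_request("http://localhost:8083", "iceberg-sink", sink_config)
# urllib.request.urlopen(request) would submit it to a running Connect worker.
```

Building the request separately from sending it keeps the payload easy to inspect before anything is deployed.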
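The data format step describes a Debezium-style event for the balance snapshot: a schema, the after state, and metadata with the database, table name and operation type, plus a key carrying the primary key. The sketch below shows one way such an event could look, using the Kafka Connect JSON envelope with `schema` and `payload`. It is an illustration, not the code from the post: the functions `balance_value` and `balance_key`, the plain arguments (instead of the generator's `User` and `Transition` types) and the names `bank` and `balance` are assumptions, and the sink may expect additional fields.

```python
import json


def _field(name: str, type_: str) -> dict:
    # Kafka Connect JSON schema entry; optional=False marks the field as required.
    return {"field": name, "type": type_, "optional": False}


def balance_value(timestamp: int, user_id: str, balance: float) -> str:
    """Build a Debezium-style change event value without the 'before' state."""
    row_fields = [
        _field("user_id", "string"),
        _field("balance", "double"),
        _field("timestamp", "int64"),
    ]
    source_fields = [_field("db", "string"), _field("table", "string")]
    schema = {
        "type": "struct",
        "optional": False,
        "fields": [
            {"field": "after", "type": "struct", "optional": False, "fields": row_fields},
            {"field": "source", "type": "struct", "optional": False, "fields": source_fields},
            _field("op", "string"),
        ],
    }
    payload = {
        "after": {"user_id": user_id, "balance": balance, "timestamp": timestamp},
        "source": {"db": "bank", "table": "balance"},  # hypothetical names
        "op": "c",  # Debezium code for a create (insert) operation
    }
    return json.dumps({"schema": schema, "payload": payload})


def balance_key(user_id: str) -> str:
    """Build the event key; the same field is also present in the value."""
    schema = {"type": "struct", "optional": False, "fields": [_field("user_id", "string")]}
    return json.dumps({"schema": schema, "payload": {"user_id": user_id}})


value = json.loads(balance_value(1700000000000, "user-1", 125.5))
key = json.loads(balance_key("user-1"))
```

Note that `user_id` appears both in the key and in the `after` part of the value, and that every field is declared with `optional` set to `False`, matching the requirements stated in the text.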
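The effect of the upsert option on the balance table can be illustrated with a toy in-memory model. This is not the sink's implementation, only a sketch of the semantics described in the text: with upsert enabled a new event replaces the existing row with the same key, and with upsert disabled every event becomes a new row. The function `apply_events` and the sample events are hypothetical.

```python
def apply_events(events: list, key: str, upsert: bool) -> list:
    """Apply events to an in-memory 'table' and return its rows."""
    rows = []
    index = {}  # key value -> position of the current row in `rows`
    for event in events:
        k = event[key]
        if upsert and k in index:
            rows[index[k]] = event  # replace the existing row for this key
        else:
            index[k] = len(rows)
            rows.append(event)  # append a new row
    return rows


events = [
    {"user_id": 1, "balance": 100, "timestamp": 1},
    {"user_id": 2, "balance": 50, "timestamp": 2},
    {"user_id": 1, "balance": 80, "timestamp": 3},
]

appended = apply_events(events, key="user_id", upsert=False)
upserted = apply_events(events, key="user_id", upsert=True)
```

With `upsert=False` the table keeps both entries for `user_id` 1, which is the behaviour seen in the balance query; with `upsert=True` only the latest balance per user remains.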
